print("Say hello to our trio of authors: two curious humans and an Artificial Intelligence, \

all equally passionate about the fascinating world of MLOps!")

Hold on 🖐️ that was ChatGPT getting ahead of itself!

Who are we 🥚🐣

This preface is the only section written entirely us. Otherwise, we mostly played the part of prompt engineers and reviewers to our co-author 🚀

Gauthier and Yashas are Dataikers by day and perpetual seekers of knowledge at all times. We began writing this book as Developer Advocates in early-2023. ChatGPT's release meant AI, a buzzword from our industry, invaded everyday conversation and public consciousness. We started to explore its intricacies and absurdities by generating a book on a familiar topic: operationalizing models, or MLOps for short.

What's in the book for the reader 🎁📖

Our experiences helping teams apply Machine Learning and automation inspired this book. We've worked with public organizations and private-sector companies globally, from among the largest ones to others at the startup stage. We wanted to translate our prompts insights about the technical aspects of MLOps into plain English.

We also hope reading generative AI content is as enlightening as writing it. The book might give you a glimpse into the future of AI and its place in our world. Whether you're in a career navigating the waves of digital transformation, an educator looking to understand its applications or a policymaker grappling with its many societal implications!

Who is our co-author 🤖🦾

Directing ChatGPT, a worthy third author, was a unique challenge. We had to nudge it frequently in the right direction. Common concerns regarding AI-generated content are hallucinations and inaccuracies. Our biggest challenge was steering it to write precisely, with the right details and the intended tone.

For example, ChatGPT had an interesting take on its abilities for the Preface:

With an appetite for data and a knack for number crunching, AI helps bring a unique perspective to this book. While AI might not be able to play the guitar or enjoy a good cup of coffee, it compensates by devouring gigabytes of data for breakfast and generating insights at lightning speed!

I will never have time to read another MLOps book 🧠

AI can definitely make music. And maybe soon, it can discern good coffee from bad--for breakfast! However, it can't yet be as creative as humans and in an intentional way. So let's explore this evolving landscape and the promise/limits of AI-generated content via this new digital artifact!

But you might worry! How long will it take to read a book written by a digital mind trained on all the world's data!? We have worked hard on making it short and to the point. Imagine it as time well spent during 3 office commutes. Or the perfect excuse to sip several nice flat whites in your favorite cafe! You will become knowledgeable on MLOps in no time 😎

Get in touch

If you have comments and feedback or spot a hallucination or few, we'd love to hear from you 🙌

Chapter 1: Data Preparation for Machine Learning

Table of Contents

- 1.1 Data Discovery and Exploration

- 1.2 Data Quality and Structure

- 1.3 Data Cleaning and Transformation

- 1.4 Data Preparation Techniques

Chapter 1: Data Preparation for Machine Learning

1.1 Data Discovery and Exploration

1.1.1 Sources of Data

Criteria for selecting data sources

Identifying the right data sources for a machine learning project involves a thoughtful approach that starts with a clear understanding of your business objectives and the corresponding machine learning problem. The kind of data you need, and the sources that would be most relevant, depend heavily on what you're trying to achieve. For instance, if you're building a recommender system for an e-commerce platform, transactional data reflecting user buying behavior would be crucial. On the other hand, for a predictive maintenance model for manufacturing machinery, sensor data would be of paramount importance. As such, the first step is always to clearly articulate the problem you're trying to solve, the features you might need to solve it, and the data sources where these features could be found.

Overview of different potential data sources

Data sources can vary significantly across different sectors, companies, and problem domains. They could be internal or external, structured or unstructured, static or real-time, and may come in a variety of formats. Common internal data sources include databases and data lakes, which store structured data like transactional data or unstructured data like text, images, and more. External data sources could include APIs providing access to dynamic data like social media feeds or weather data, flat files for simpler, less volatile data, or real-time data streams for up-to-the-minute information. Each of these sources has its own strengths and weaknesses. For example, internal databases may offer rich, detailed data, but accessing and extracting the needed information might be challenging due to data governance and privacy policies. On the other hand, real-time data streams provide the most current data, but dealing with such data requires specialized tools and techniques to handle its velocity.

Formulating data acquisition strategy

Once you have identified potential data sources, the next step is to devise a data acquisition strategy. This strategy should outline how to access, retrieve, and ultimately integrate the data for your machine learning tasks. This involves defining the tools, technologies, and processes needed to extract data from the identified sources, ensuring the data is in a usable format, and deciding how frequently the data should be updated. For instance, if you're dealing with data from APIs, you would need to consider aspects such as rate limits, data paging, and handling API errors. In the case of real-time data streams, you might need to set up data pipelines that can ingest, process, and store streaming data effectively.

Challenges in data acquisition and integration

Lastly, it's important to anticipate potential challenges associated with data acquisition. Depending on the nature of the data sources, various obstacles might arise. Legal and ethical considerations, for example, may limit access to certain data or prescribe how it should be used. Technical limitations, such as system performance or bandwidth constraints, might affect data extraction processes. Integration issues could emerge when dealing with data from multiple sources, particularly if the data is in different formats or follows different schemas. Early identification of these challenges allows for the development of effective mitigation strategies, ensuring the data acquisition process runs smoothly and the resulting data is suitable for your machine learning project.

1.1.2 Data Formats

Introduction to data formats

Data is the raw material for any machine learning project, and it comes in a myriad of formats. Grasping the nature and characteristics of these formats is a crucial first step in data discovery and exploration. The format not only dictates how data can be used, but it also informs the choice of tools and strategies for its processing.

Structured data, such as CSV files or SQL databases, has a clearly defined structure with rows and columns, akin to a spreadsheet. This type of data is typically straightforward to process and analyze using standard data manipulation tools. For instance, consider CSV files. They can be readily loaded into a Pandas DataFrame in Python, a popular library that provides a flexible data manipulation framework. With Pandas, you can easily sort, filter, group, and transform your data. On the other hand, SQL databases have a tabular structure and are queried using SQL. SQL is a powerful language that allows for robust data retrieval operations, including joining tables, filtering records, and performing complex aggregations. However, it's important to note that extracting data from these sources often involves a deep understanding of the database schema and sometimes even the underlying business rules.

Impact of data format on preprocessing

Semi-structured data, such as JSON or XML, doesn't conform to the rigid table structure but still contains identifiable and extractable fields. This format often represents data from APIs or NoSQL databases. Unlike structured data, semi-structured data often requires additional preprocessing steps to transform the data into a tabular format. For instance, a JSON file may contain an array of nested objects, each representing a unique record. To process this data, you might need to "flatten" the nested structures into a single table, a step that can introduce complexity into your data processing pipeline.

Unstructured data, including text, images, and audio, poses its own unique challenges. This type of data doesn't conform to a predefined schema and often requires complex preprocessing steps to transform it into a usable format for machine learning models. For example, text data may need to be tokenized, lemmatized, and vectorized before it can be fed into a model. Similarly, image data might need resizing, grayscale conversion, or normalization, to mention a few. The preprocessing steps are not only format-dependent but also model-dependent, further complicating the process.

Moreover, there are advanced data formats like HDF5 or Parquet designed to handle large, complex datasets efficiently. These formats support complex data structures like multi-dimensional arrays or nested tables and are optimized for reading and writing large volumes of data. They're particularly useful in big data scenarios, where traditional data formats might prove inefficient or even infeasible.

In conclusion, understanding the intricacies of data formats is crucial for successful data discovery and exploration. The format affects every subsequent step in the data processing pipeline and can significantly impact the overall performance and feasibility of a machine learning project. It's thus imperative for ML practitioners to be well-versed in handling a wide range of data formats.

This is not a pipe(line) - Midjourney

This is not a pipe(line) - Midjourney

1.1.3 Data Size

Implications of dataset size

Data size is a fundamental characteristic that deeply influences the approach to data preprocessing and model selection in machine learning. It's often not the volume of data that presents a challenge, but rather the ability to process and analyze that data efficiently and effectively.

In the case of small datasets that can comfortably fit into a machine's memory, standard data processing libraries such as Pandas in Python are typically sufficient. The strategies employed might focus on maximizing the information extracted from the limited data, such as careful feature engineering, or using complex models that can capture intricate patterns. However, one must be cautious of overfitting, where a model becomes too complex and learns the noise in the data rather than the actual patterns.

When dealing with large datasets that exceed a machine's memory capacity, different approaches are required. Distributed computing frameworks like Apache Spark or Dask become essential, capable of processing large volumes of data across a cluster of machines, making it feasible to work with big data. However, working with large datasets introduces its own set of challenges, such as increased computational costs, longer processing times, and more complex data management. Moreover, the modeling strategies might shift towards simpler, more scalable models, or using techniques such as model ensembling or deep learning, which can handle large amounts of data effectively.

Impact of data size on model complexity and training time

It's worth noting that sometimes, data might exceed capacity due to a lack of specifying practical constraints on the data. For example, if a system is designed to accept open-ended text inputs without any character limit, it may lead to unmanageably large data points. Additionally, in the early stages of model development, it might not be necessary to use all of the data available. A subset of the data could be sufficient for initial modeling, thereby easing computational demands. However, in a production environment, there might be a need to process the entirety of the data, necessitating the need for efficient data management strategies. We will delve deeper into strategies for data sampling in a subsequent section.

1.1.4 Data Type

Differentiating between types of data

The type of data at hand is another pivotal factor that guides the preprocessing steps and informs the choice of machine learning algorithms. Broadly speaking, data can be classified into numerical, categorical, and text data, each with its own peculiarities and challenges.

Numerical data, such as quantities or measurements, are naturally suited for mathematical and statistical operations. Such data might need normalization or standardization to ensure that all features are on a comparable scale, especially when using algorithms sensitive to feature scales, such as k-nearest neighbors or support vector machines. Further, numerical data might contain outliers that need careful handling to avoid skewing the model's learning.

Categorical data, on the other hand, consists of discrete classes or categories. This type of data requires encoding before it can be used in machine learning models. Common encoding techniques include one-hot encoding or ordinal encoding. However, handling high cardinality categorical data, where a feature has many possible values, can be challenging and might require more sophisticated techniques such as target encoding or embeddings.

Text data, consisting of words or sentences, requires specialized preprocessing steps such as tokenization, stemming, and lemmatization, followed by vectorization to convert the processed text into a numerical format that can be fed into a machine learning model. Dealing with text data often involves natural language processing (NLP) techniques, and the choice of model can range from traditional methods such as Naive Bayes to more advanced techniques like transformers in deep learning.

In conclusion, understanding the type of data you're working with is crucial in determining the most appropriate preprocessing steps and selecting the best-suited machine learning models. It's crucial for ML practitioners to be proficient in handling different data types to successfully tackle diverse problems.

1.1.5 Data Exploration and Visualization Techniques

Importance of data exploration

Data exploration and visualization techniques are the critical first steps in understanding and interpreting the dataset at hand. These techniques are instrumental in the discovery phase of the machine learning process, helping uncover hidden patterns, detect anomalies, and identify relationships between variables. Beyond being crucial for data scientists and machine learning engineers, these methods are also indispensable for bridging the communication gap with stakeholders and domain experts.

Exploratory Data Analysis (EDA), a fundamental part of this stage, employs statistical techniques, often supplemented with visual methods, to understand the primary characteristics of a dataset. The use of summary statistics such as mean, median, and standard deviation gives an overview of the dataset's central tendency and spread. Calculating correlation coefficients between variables provides insights into their relationships, indicating whether they move together, which could be a sign of multicollinearity or potential feature importance.

Tools and techniques for data visualization

Visual representation methods such as histograms, scatter plots, box plots, and heat maps offer a visual interpretation of these statistical insights. A histogram, for instance, can provide a clear picture of a variable's distribution. Scatter plots, on the other hand, can reveal the relationship or lack thereof between two variables. Box plots can illustrate the spread and skewness of the data, while heat maps can help understand the correlation between multiple variables at a glance.

Beyond their utility in the exploration stage, these visualizations serve as powerful communication tools, especially when explaining complex data insights to non-technical stakeholders. A well-crafted visualization can convey the story behind the data, making it easier for stakeholders to understand and engage with the findings. This effective communication can prove crucial when discussing findings and soliciting feedback from domain experts, who, while not necessarily well-versed in data science, possess deep domain knowledge.

Identifying potential issues through data exploration

In the context of machine learning, EDA and visualization techniques provide valuable insights that inform subsequent steps in the process. Observations from EDA can guide the choice of models, the need for data transformations, and the potential for feature engineering. For instance, noticing high skewness in a variable could indicate the need for a transformation, such as log transformation, before its use in a model. Similarly, finding high correlation between variables could point to multicollinearity, which could affect the performance of certain models like linear regression.

Taking it a step further, EDA and visualization techniques can also facilitate interactions with subject matter experts (SMEs). These SMEs, though not technically inclined, can provide a wealth of domain-specific knowledge. By presenting them with clear visualizations and data-based findings, they can contribute to the machine learning process by providing insights that may not be immediately apparent from the data alone. This collaborative effort can lead to a richer understanding of the dataset and the problem at hand, ultimately improving the quality and accuracy of the machine learning solution.

In conclusion, data exploration and visualization techniques are fundamental to the machine learning process. They provide a means to understand the 'story' the data tells, facilitate effective communication with stakeholders and SMEs, and provide critical insights that guide the subsequent steps in the machine learning process. The ability to effectively explore and visualize data is, therefore, a critical skill for any data scientist or machine learning engineer.

1.2 Data Quality and Structure

1.2.1 Data Structure

Importance of structure in ML problem formulation

While data formats represent the "container" for data, the concept of data structure dives deeper into how individual elements within that container relate to one another. Understanding the data structure is vital for machine learning practitioners as it influences the data preparation, feature extraction, and the choice of the modeling algorithm.

For instance, if data is organized in a tabular structure, this implies a certain level of independence between rows (observations), with each column (variable) potentially offering different types of information. In this case, the focus often lies on ensuring data consistency, handling missing values, and dealing with potential outliers. Moreover, the tabular data structure implies the possibility to apply a wide range of machine learning algorithms, from logistic regression to complex ensemble methods.

However, data can also be structured in more complex ways. Hierarchical data, for example, has a tree-like structure where each data point, except the top one, is connected to exactly one parent. This type of structure is common in scenarios such as organizational charts or file systems, and it often requires specialized handling and specific types of models, such as tree-based methods.

Temporal data involves a time component, implying a specific order of data points. This structure is typical in time series analysis, where the sequence of observations matters significantly. Depending on the task at hand, traditional time series models like ARIMA, or more complex approaches like recurrent neural networks, might be more suitable.

Network data, on the other hand, involves relationships between entities represented as graphs. This structure arises in scenarios like social network analysis or web page ranking, and it calls for graph algorithms and network analysis techniques to extract meaningful patterns.

In conclusion, the understanding of data structure goes beyond recognizing its format. It involves grasping the inherent relationships among data elements, guiding the practitioner in making informed decisions regarding data preprocessing, feature extraction, and model selection. This understanding is a prerequisite for efficient and effective data handling in machine learning workflows.

1.2.2 Data Schema

Role of schema in data preprocessing

In the context of data preparation for machine learning, understanding the schema of your data set is a key aspect of the exploratory process. A data schema provides a detailed description of how data is organized within the dataset, including the relationships between different data elements, data types, and constraints.

Firstly, data schemas provide information about the data types of each field in the dataset. This includes whether a field contains numerical data, categorical data, text, or some other type of data. This information is crucial for determining the appropriate preprocessing steps and the selection of machine learning models. For example, categorical data might require one-hot encoding, while text data might require natural language processing techniques.

Secondly, data schemas can outline the relationships between different fields in the dataset. This can take the form of primary and foreign keys in relational databases, nested fields in semi-structured data like JSON, or edges in graph databases. Understanding these relationships can help in creating derived features, which might improve the performance of your machine learning models. It can also guide the process of data cleaning, as inconsistencies in these relationships often indicate data quality issues.

Thirdly, data schemas can specify constraints that data must adhere to. These might be explicit constraints like a field containing only positive numbers, or implicit constraints like a sales figure being unlikely to exceed a certain threshold. These constraints can be a valuable tool for identifying potential data errors.

Finally, a data schema can help facilitate communication about the data and its characteristics across different teams and stakeholders. It provides a standard language that can be used to discuss the dataset, its structure, and its potential issues.

In sum, understanding the data schema is a crucial aspect of data preparation. It provides critical insights into the data's structure, informs the preprocessing steps, and aids in the detection of potential data quality issues.

1.2.3 Data Quality

Importance of data quality in machine learning

Data quality is a cornerstone of any machine learning project and extends beyond mere technicalities. It encapsulates organizational, technical, and logistical challenges that require careful attention and persistent effort. High-quality data enhances the potential for machine learning models to yield reliable, precise, and meaningful results.

Data quality can be evaluated along several dimensions, including completeness, accuracy, and consistency. Completeness refers to the absence of missing values in the dataset, ensuring that all necessary data is present and usable. Accuracy involves checking whether the data accurately represents the real-world phenomena it is supposed to capture. Consistency, another critical aspect, refers to the alignment of data according to the defined schema and its uniformity across different data sources.

Data quality as a continuous process

However, it is crucial to understand that maintaining good data quality is not a one-time task. It requires a continuous and coordinated effort across the organization, involving not only the data engineering teams but also business stakeholders. This collaboration ensures that the necessary data elements for business context are accurately and consistently captured, thus enhancing the overall quality of data.

Data engineering teams play a crucial role in this process. They are tasked with designing and implementing processes that maintain the quality of data. This process involves understanding the business context, identifying the most important data elements, and creating systems to capture this data accurately.

Moreover, establishing robust data profiling and auditing practices is vital to ensure that data quality is maintained over time. Data profiling includes understanding the structure, content, and quality of the data, which can help identify any anomalies, errors, or inconsistencies. Regular data audits involve checking the data against predefined metrics and rules to ensure that it meets the required quality standards.

Strategies for improving data quality

Data quality requires many checks - Midjourney

Data quality requires many checks - Midjourney

Machine learning techniques can also be utilized in these auditing processes. Anomaly detection methods, for instance, can be used to identify data points that deviate significantly from the norm. These outliers might need further investigation or correction. Unsupervised learning techniques, such as clustering or autoencoders, are often employed for this purpose, offering an automated and scalable way to ensure data quality.

However, maintaining data quality is not just about technical solutions. It is just as much about overcoming organizational challenges. These challenges might include a lack of ownership or understanding of the importance of data quality, or insufficient resources dedicated to data quality initiatives. Addressing these challenges requires strong leadership, clear communication, and a cultural shift within the organization that places a high value on data quality.

In conclusion, data quality is a multifaceted issue that requires continuous effort, collaboration, and a mix of technical and organizational solutions. Ensuring high-quality data is critical for the success of machine learning projects, and despite the challenges involved, the rewards it brings in terms of improved model performance and reliability make it a worthwhile endeavor. By understanding and addressing the various aspects of data quality, organizations can build a solid foundation for their machine learning initiatives.

1.3 Data Cleaning and Transformation

1.3.1 Data Cleaning

Approaches for addressing errors and inconsistencies in data

Data cleaning is an indispensable component of the machine learning pipeline. This process entails the identification and rectification of errors and inconsistencies in datasets to optimize their overall quality. The complexity of this task often calls for a carefully calibrated blend of automation and manual inspection, guided by domain knowledge.

Data inconsistencies can arise from a myriad of sources. Incorrect data entries, a commonplace occurrence, can be a result of human error during data collection or transfer. Misspellings, another common issue, introduce uncertainty and inconsistency into the dataset. Discrepancies in data representation, such as dates recorded in different formats or inconsistent use of units, add another layer of complexity to the data cleaning process. Furthermore, inconsistent formats can cause headaches, particularly when integrating data from different sources. These problems, if not addressed, can distort data distributions, causing a biased learning process and, consequently, subpar predictive performance. To combat these issues, it's crucial to employ systematic methods. These might involve rules-based techniques, utilizing predefined rules based on domain knowledge to pinpoint anomalies, or statistical methods that identify outliers based on data distributions.

Techniques for dealing with missing data

Missing data is another critical aspect of data cleaning. There's an abundance of reasons why data can go missing - an unanswered question in a survey, a faulty sensor during data collection, or even data being lost in the shuffle during transmission or processing. The techniques for managing missing data are diverse, including deletion, imputation, and prediction. Deletion, which involves discarding data points or features with missing values, is the most straightforward approach. However, this method can lead to the loss of valuable information. Imputation, on the other hand, fills in missing values using statistical measures such as mean, median, or mode. More sophisticated methods might leverage machine learning techniques to predict missing values based on other data points. The technique of choice hinges on the nature and extent of missing data, as well as the specific demands of the machine learning task at hand.

Role of domain knowledge in data cleaning

Domain knowledge is pivotal in data cleaning. It informs what constitutes an error or inconsistency, potential causes of missing data, and the most effective techniques for rectification. Additionally, domain experts can offer guidance in the feature engineering and selection processes, which often occur simultaneously with data cleaning. Therefore, maintaining a productive collaboration between data scientists and domain experts is crucial for effective data cleaning.

Consider the case of a project aiming to predict hospital readmission rates to illustrate the significant impact of data cleaning on model performance. The model's initial performance left much to be desired. However, after rigorous data cleaning, which involved error rectification and imputation of missing values, the predictive performance saw a notable improvement. This case underscores the importance of data cleaning in machine learning projects. Despite the challenges and time investment it necessitates, effective data cleaning can substantially bolster the performance of machine learning models, resulting in more dependable and actionable insights.

1.3.2 Data Transformation

Need for data transformation

Data transformation is an essential part of the data preparation process, transforming raw data into a format that is more suitable for machine learning algorithms. The primary goal of this step is to enhance the predictive power of the models by creating a data structure that allows the algorithms to detect patterns more effectively.

Understanding the need for data transformation begins with the realization that different types of data require different types of treatment. Numerical data, for example, often benefits from standardization or normalization. Standardization rescales data to have a mean of 0 and standard deviation of 1, ensuring all variables operate on the same scale. This is vital for algorithms like k-nearest neighbors (KNN) or support vector machines (SVM), which are sensitive to the scale of input features.

Transformation techniques and their impact

Normalization, on the other hand, scales features to a range, often between 0 and 1, which can be advantageous when the data follows a Gaussian distribution but the standard deviation varies. This is done using the formula y = (x - min) / (max - min), where min and max are the minimum and maximum values in the feature column respectively.

Binning is another transformation technique often applied to continuous data to convert it into discrete 'bins'. This can be done using various strategies: fixed-width binning, where the data range is divided into fixed-width intervals; adaptive binning, where the data range is divided based on data distribution, typically using quartiles; and cluster-based binning, where clustering algorithms like k-means are used to create bins.

Encoding is a technique used to transform categorical data into a format that machine learning algorithms can digest. One-hot encoding creates new binary columns for each category, with 1s and 0s indicating the presence or absence of the category. Ordinal encoding converts each category to a unique integer, which can be used when there's an inherent order in the categories. However, it can introduce a notion of false proximity between categories.

The impact of these transformation techniques on model performance is significant. An unscaled dataset might lead to a feature with a broader range of values dominating the model training, causing the model to underperform. Inappropriate encoding might introduce unintended order in the categories, leading to skewed predictions.

Selection of appropriate transformation techniques

Choosing the correct transformation technique requires understanding the data type and model requirements. Tree-based models can handle different types of data and variable scales, requiring less data transformation. On the contrary, linear and distance-based models often need extensive data transformations.

Automated tools for data transformation

Automated machine learning (AutoML) tools can automate the data transformation process. These tools can analyze the data and apply suitable transformations. However, they may not make the best decisions for complex or unusual datasets, and the lack of transparency can lead to unexpected results. A solid understanding of data transformation techniques remains a key skill for machine learning practitioners despite the availability of these tools.

1.3.3 Dealing with Messy, Real-world data

Importance of and techniques for handling missing data

Real-world data is often messy, with missing values and outliers being common issues that must be tackled effectively to build robust machine learning models. Both these issues can significantly impact the performance of these models, making their handling a critical part of the data preparation process.

Missing values disrupt the distribution of variables, potentially leading to biased or incorrect results if not appropriately addressed. Several techniques have been developed to handle missing data. Listwise and pairwise deletion are basic techniques for handling missing data, but they may not always be suitable due to the potential impact on sample size and comparability of analyses.

Imputation techniques, such as mean imputation and regression imputation, replace missing values with estimated ones. Mean imputation uses the mean of the observed values, but this approach can lead to an underestimation of the variance and does not account for the correlation between variables. Regression imputation predicts missing values based on other variables, but it can create artificially perfect relationships when the imputed variable is used as a predictor. Multiple imputation, an advanced technique, generates several completed datasets and combines the results, providing a robust solution that accounts for the uncertainty of missing data.

Understanding outliers and their impact

Outliers, data points that significantly deviate from other observations, can skew statistical measures and disrupt model performance. Identifying outliers is the first step towards dealing with them, and this can be achieved using statistical methods, distance-based methods, or density-based methods.

Handling outliers is a nuanced task that often requires domain expertise. If outliers result from errors in data collection or processing, deletion might be appropriate. However, if outliers indicate critical structural deviations in the data or rare but important events, other strategies should be considered. These include transformation methods, such as scaling or logarithmic transformation, that reduce the impact of outliers, and binning methods that categorize data into different buckets, making the model less sensitive to outliers.

Dealing with missing values and outliers is integral to the data preparation process for machine learning. Appropriately handling these issues greatly improves the quality of data and leads to more reliable and accurate machine learning models.

1.4 Data Preparation Techniques

1.4.1 Data Sampling

Importance of data sampling

Data sampling is a strategic method used in data preparation to create manageable yet representative subsets of data. It's a practical approach that aids in handling large volumes of data efficiently, reducing computational resources and time, especially during the initial stages of model development. Furthermore, it supports exploratory data analysis and initial model testing, providing a representative snapshot of the dataset without overwhelming resources.

There are several sampling techniques that a data practitioner can employ, each suited to different scenarios. Simple random sampling is perhaps the most straightforward: it involves selecting data points from the dataset randomly, with each having an equal chance of being picked. This method is quick and easy but may not be representative if the data has implicit structures or imbalances.

Different sampling techniques

Stratified sampling, on the other hand, divides the entire dataset into distinct groups or strata based on specific attributes, such as class labels in classification problems, and then selects samples from each stratum. This approach ensures that the sample maintains the original data's proportions and structures, resulting in better representation, particularly when dealing with skewed data.

Cluster sampling is another technique where the dataset is divided into clusters based on some inherent characteristics, and then clusters are randomly selected, with all data points within the selected clusters forming the sample. This method is effective when data naturally form groups or when data collection is naturally clustered, like geographical data.

Addressing imbalanced datasets presents a unique challenge. Techniques like oversampling and undersampling are often employed to balance class representation. In oversampling, copies of the minority class are randomly selected and added to the dataset to balance the classes. However, this can lead to overfitting, as the model might simply memorize these repeated instances rather than learn the general patterns.

Undersampling, on the other hand, involves reducing the instances of the majority class. This technique can improve computational efficiency and balance the class representation, but it might result in the loss of potentially important data. Additionally, both oversampling and undersampling alter the original distribution of the target, potentially creating a model that is unrepresentative of the reality of the problem.

Impact of sampling on model performance

Each of these sampling techniques comes with trade-offs. While stratified sampling ensures better representation, it might be more complex to implement. Cluster sampling can lead to loss of information if intra-cluster variability is high. Understanding these trade-offs is crucial in selecting the appropriate sampling technique for a given data problem.

The selection of sampling techniques has a notable impact on model performance and generalization. Inappropriate sampling can lead to a model that performs well on the sample but fails to generalize to unseen data, leading to poor model performance in production. Therefore, understanding and appropriately applying data sampling techniques is a critical step in the data preparation process, one that can significantly influence the success of machine learning initiatives.

1.4.2 Data Splitting

Techniques for data splitting

Data splitting is a fundamental aspect of data preparation for machine learning that aids in building robust and generalizable models. The primary purpose is to assess the model's ability to perform well on unseen data, which is indicative of its real-world applicability. The process involves partitioning the dataset into three sets: training, validation, and test. The training set is used to train the model, the validation set is used to tune hyperparameters and make decisions during the model development process, and the test set is used to evaluate the model's final performance.

The triple splitting strategy is especially important in ensuring the model's robustness and reliability. By setting aside a test set that the model never sees during training, we create a "holdout" dataset. This holdout dataset allows us to estimate how the model would perform on completely new, unseen data. It's important to clarify that the term 'holdout' can sometimes cause confusion, as it is also used to refer to the validation set. In the context of data splitting, however, the holdout is the final test set, completely unseen during the training and validation phases. This strategy helps us avoid 'data leakage,' where information from the test set inadvertently influences the training process, leading to overly optimistic performance estimates.

Various techniques are used for data splitting. The simplest approach is the random split, which randomly assigns data points to the training, validation, or test set. While this technique is straightforward and widely used, it can lead to biased splits if the dataset has imbalanced classes or time-dependent features. The stratified split technique can be used to maintain the same distribution of classes in each split as in the original dataset, making it especially useful when dealing with imbalanced data. The time-based split is crucial when working with time-series data where the chronological order of data points matters.

Importance of cross-validation

Cross-validation is another key technique associated with data splitting. It provides a robust estimate of the model's performance and helps prevent overfitting. In k-fold cross-validation, the training data is split into 'k' subsets. The model is trained on 'k-1' subsets and validated on the remaining subset. This process is repeated 'k' times, each time with a different validation subset. The performance of the model is then averaged over the 'k' trials, providing a less biased estimate of the model's ability to generalize.

Influence of data splitting strategy on model selection and tuning

The strategy for data splitting has a substantial influence on model selection and tuning. Inappropriate splitting may lead to optimistic or pessimistic estimates of model performance, leading to incorrect decisions about which model to select or how to tune it. Therefore, understanding the nature of the data and the appropriate splitting technique is essential in the model development process. Careful data splitting ensures a fair and unbiased assessment of the model and aids in building models that are truly ready for real-world deployment.

1.4.3 Feature Engineering

Introduction to feature engineering

Feature engineering is a crucial step in the data preparation process that involves creating meaningful input variables or features to enhance the performance of machine learning models. It's an art as much as it is a science, requiring domain knowledge, creativity, and a deep understanding of the machine learning algorithms used. Effective feature engineering helps models uncover complex patterns, improves model interpretability, and reduces computational requirements by decreasing the dimensionality of the data.

Several techniques for feature engineering can be employed by data practitioners. Binning is one such method that transforms numerical variables into categorical ones by grouping a set of numerical values into bins. This can handle outliers and reveal relationships that aren't apparent in the raw, numerical data. Creating polynomial features, particularly useful for linear models, helps capture relationships between features that aren't merely linear. Interaction features capture the effect of one feature on another. They're created by combining two or more features, often through simple operations like addition, subtraction, multiplication, or division.

The complexity of dealing with data types like text and images necessitates specialized feature extraction techniques. Methods such as Natural Language Processing (NLP) for text data, and convolutional neural networks (CNNs) for image data, transform these unstructured data types into a structured format that machine learning models can understand and learn from.

Importance of domain knowledge in feature engineering

A key aspect of feature engineering is the application of domain knowledge. This understanding of the context of the problem can guide the creation and transformation of features, leading to more robust and interpretable models. One significant manifestation of domain knowledge is feature enrichment, a process that involves supplementing the dataset with additional, relevant information. For instance, in predicting house prices, meteorological data could be integrated to account for how weather patterns might influence property values. Similarly, a time-series model predicting stock prices could benefit from integrating relevant economic indicators. Feature enrichment, powered by domain expertise, not only provides valuable input to the model but also significantly improves its performance.

Automated feature engineering tools

With the advancement in ML technologies, automated feature engineering tools are now available. These tools, capable of generating and testing a large number of features, are especially beneficial when dealing with high-dimensional data. However, their use comes with caveats. These tools may not always consider the unique characteristics of the data and the specific business context. Additionally, the features they create may lack the interpretability that comes with carefully handcrafted features.

To summarize, feature engineering is a powerful tool in the machine learning toolbox. It bridges the gap between raw data and models, making the data more suitable for modeling. Although it requires effort and expertise, successful feature engineering can significantly enhance model performance and interpretability.

1.4.4 Feature Selection

Techniques for feature selection

Feature selection plays a pivotal role in machine learning, influencing both model performance and interpretability. It involves identifying and selecting the most relevant features (input variables) for use in model construction. Feature selection techniques can aid in reducing overfitting, improving model accuracy, and reducing training time. Moreover, by eliminating irrelevant or redundant features, we can simplify our models, making them easier to interpret and explain.

There are several techniques for feature selection, each with its own advantages and disadvantages. Filter methods, for instance, evaluate each feature's relevance by looking at the statistical properties of the data. Techniques such as Chi-square test, information gain, and correlation coefficient fall under this category. Filter methods are generally fast and scalable, but they do not take into account the potential interactions between features.

Wrapper methods, on the other hand, evaluate subsets of variables to determine their effectiveness in improving model performance. These methods, such as recursive feature elimination (RFE), genetic algorithms, or forward and backward elimination, create multiple models with different subsets of features and select the subset that delivers the best performance. However, they can be computationally expensive, especially with high-dimensional data.

Embedded methods integrate feature selection into the model training process. Techniques such as LASSO and Ridge regression, or tree-based methods like Random Forests and Gradient Boosting, incorporate feature selection as part of their learning. These methods can capture complex interactions between features and are usually more efficient than wrapper methods, but they may be more challenging to interpret.

Dimensionality reduction techniques

While feature selection focuses on choosing the most relevant features, dimensionality reduction seeks to create a new set of features that capture the essential information in the original data. Techniques such as Principal Component Analysis (PCA), t-Distributed Stochastic Neighbor Embedding (t-SNE), Uniform Manifold Approximation and Projection (UMAP), and autoencoders are commonly used for this purpose. These techniques can be highly beneficial when dealing with high-dimensional data, where visualization is challenging, and computational resources are stretched.

Feature selection and dimensionality reduction can significantly impact model performance. For instance, a well-chosen subset of features can lead to models that are both accurate and interpretable. Conversely, an inappropriate selection can lead to models that perform poorly on unseen data due to overfitting. There are numerous case studies where careful feature selection and dimensionality reduction have dramatically improved the performance of models, underscoring the importance of these techniques.

Role of feature selection in model interpretability and efficiency

Finally, feature selection also plays a crucial role in enhancing model interpretability and computational efficiency. By reducing the number of features, we decrease the complexity of the model, making it easier to understand and explain. This is particularly crucial in industries where model interpretability is a requirement. Moreover, fewer features mean less computational resources are needed for training and prediction, which can be a significant advantage in large-scale applications. Consequently, feature selection serves as an essential step in the data preparation process, paving the way for efficient and interpretable machine learning models.

1.4.5 Synthetic Data Generation

Understanding synthetic data

Synthetic data generation is a process that creates data designed to mimic the characteristics of real-world data but is entirely artificial. This technique is increasingly relevant in the field of machine learning and data science, providing a valuable tool in scenarios where obtaining real-world data is challenging, sensitive, or costly. It helps overcome difficulties related to data scarcity, privacy concerns, and imbalanced data distribution.

Several techniques are commonly used for synthetic data generation, each with its unique benefits and suitable for specific use-cases. One of the techniques is the Synthetic Minority Over-sampling Technique (SMOTE), which is used to tackle problems of class imbalance. Class imbalance is a common issue in real-world datasets, where one class of data significantly outnumbers the other(s). SMOTE works by creating synthetic samples from the minor class instead of creating copies, which helps to increase the representation of minority classes in the dataset.

For more complex scenarios where the goal is to generate high-dimensional data that captures the underlying distribution of the real-world data, Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs) come into play. GANs, in particular, have been instrumental in generating synthetic data that is almost indistinguishable from real data. These generative models learn to capture the intricate correlations and variability in real-world data, thus generating synthetic data that retains the complexity and richness of the original dataset.

Legal and ethical considerations in synthetic data generation

Despite its advantages, synthetic data generation is not a panacea. It's worth noting that synthetic data, being derived from real-world data, often contains systemic biases inherent in the source data. Such biases are often the product of historical and social forces. For instance, synthetic data generated from historical hiring data might unintentionally reflect past discriminatory practices. These biases can be perpetuated in the synthetic data and subsequently in the machine learning models trained on it, leading to potentially unfair and unethical outcomes.

Furthermore, biases can be introduced during the synthetic data generation process itself. The choice of the features to include, their distributions, and the relationships between them can inadvertently favor certain demographic groups over others, leading to models that perform poorly for underrepresented demographics. It emphasizes the need for careful scrutiny of the synthetic data generation process to ensure that it does not introduce or amplify biases.

Legal and ethical considerations play a crucial role when generating and using synthetic data. Data scientists must stay aware of the evolving landscape of laws and regulations around data privacy and ethical guidelines on the use of AI. Synthetic data should be generated and used in ways that comply with these rules to protect the privacy of individuals and to ensure ethical usage of machine learning models.

Illustrating the use of synthetic data

Let's illustrate the use of synthetic data with a case study. Suppose a company wants to develop a machine learning model to predict customer behavior but lacks sufficient real-world data. Here, synthetic data can be generated to mimic actual customer behavior, allowing the company to train a model that performs well in real-world scenarios. Such use of synthetic data can significantly enhance the model's performance and generalizability while ensuring customer privacy.

In conclusion, synthetic data generation is a potent tool that can augment datasets and aid in model development and testing. However, its usage requires careful handling to avoid perpetuating biases and to comply with legal and ethical guidelines. Regular audits of synthetic data and the machine learning models trained on it can help identify and mitigate biases. Incorporating diverse teams and perspectives in the development and review process can contribute to creating fairer, more robust, and reliable machine learning models. Synthetic data generation, when used thoughtfully, can significantly advance the field of machine learning, opening new possibilities for innovation and growth.

Chapter 2: Building or Reusing Machine Learning Models

Table of Contents

- 2.1 Model Development

- 2.2 Model Evaluation

- 2.3 Model Versioning and Lineage

Chapter 2: Building or Reusing Machine Learning Models

2.1 Model Development

2.1.1 Choosing Among Types of Models and Model Training

Overview of model architectures

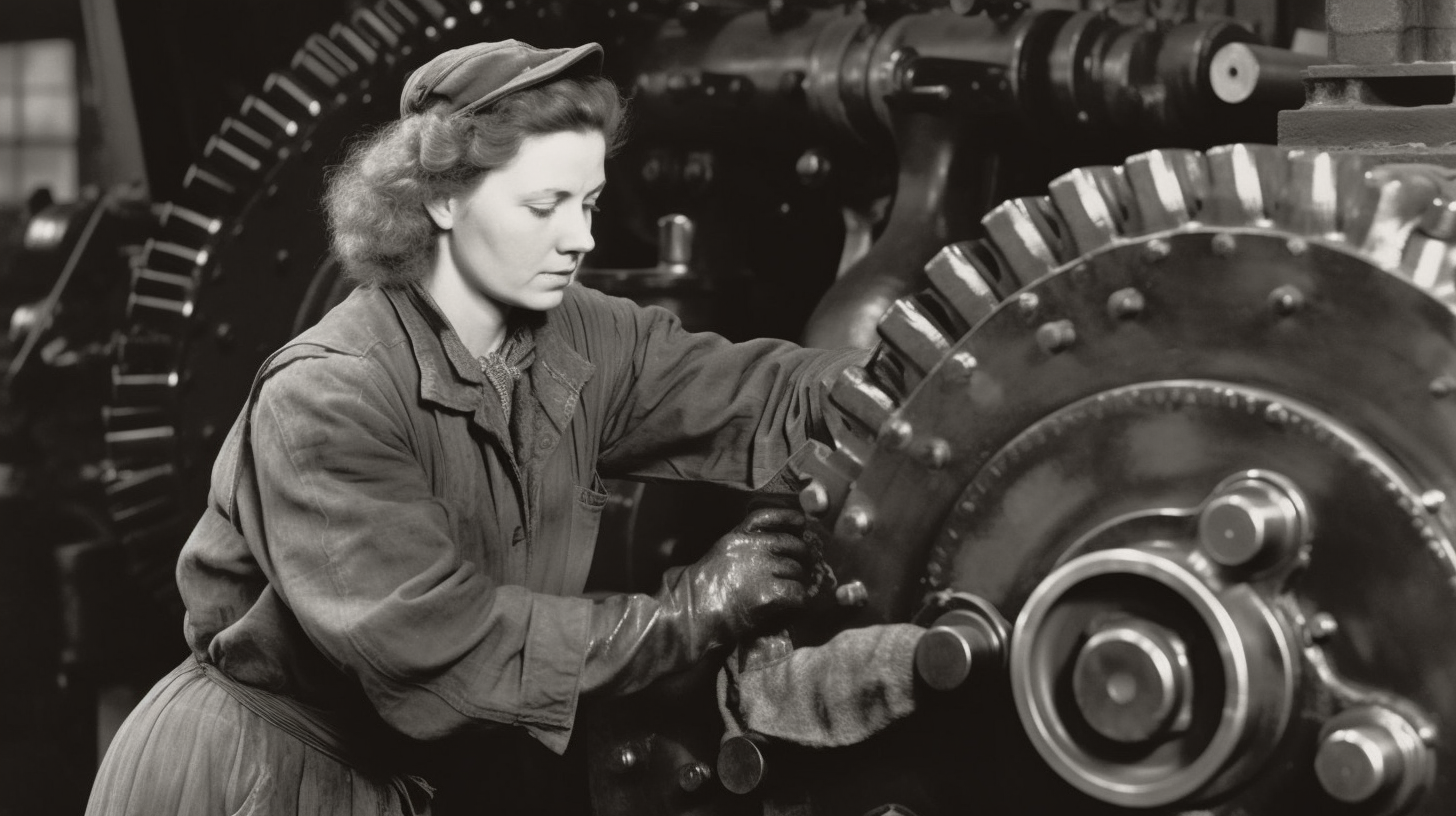

A machine can learn - Midjourney

A machine can learn - Midjourney

At the heart of every machine learning application is a model—a construct designed to learn patterns in data and make predictions or decisions. The model's architecture, training process, and final performance are closely tied to the nature of the problem we're trying to solve. This section will delve into different types of machine learning approaches, model architectures, parameters, and training techniques.

Before we discuss model architectures, we must understand the problem we're trying to solve. Machine learning problems are typically classified into supervised learning, unsupervised learning, and semi-supervised learning.

Different types of machine learning approaches

Supervised learning is a common machine learning task where each example in our training dataset is associated with a specific output value or label. Predicting house prices based on a set of features (like the number of bedrooms or the neighborhood) is a typical supervised learning problem. The model is trained to predict a continuous value (the price), making it a regression task.

Unsupervised learning, in contrast, works with datasets that don't have a specific output label. These models are used to discover hidden patterns and relationships in the data. Clustering customers based on purchasing behavior, for instance, is an unsupervised learning task. The model has to identify groups or clusters of customers with similar buying patterns.

Semi-supervised learning falls between the two. It involves training models on a dataset where some examples have labels, but others don't. This approach is often useful when collecting and labeling data is costly or time-consuming.

After identifying the type of learning task, we move onto choosing a model architecture. This process is akin to selecting the right tool for the job. Different architectures have unique characteristics and are best suited to certain kinds of problems.

Linear models, like linear regression and logistic regression, are fundamental tools in the data scientist's toolkit. They're easy to understand and interpret and often work well for relatively simple tasks or as a baseline for more complex models.

Tree-based models, such as decision trees, random forests, and gradient boosting machines, partition the data into distinct groups or classes based on certain conditions. They are powerful non-linear models and are popular for both classification and regression tasks.

Neural networks, the foundation of deep learning, consist of interconnected layers of neurons or nodes. They are particularly good at learning from high-dimensional data and have seen tremendous success in areas like image recognition, natural language processing, and more.

Ensemble models combine predictions from multiple models to generate a final prediction. The goal is to leverage the strengths of each individual model to improve overall performance and reduce the likelihood of a poor prediction.

Role of model parameters

Once we've chosen a model architecture, we need to understand the role of model parameters. Parameters are the parts of the model that are learned from the data during the training process. For instance, in a linear regression model, the parameters are the slope and intercept of the line. They're determined by the data and the learning algorithm.

Understanding how models are trained is fundamental to successful machine learning. The training process involves iteratively adjusting the model's parameters to minimize the difference between the model's predictions and the actual values. This is often achieved using optimization algorithms like gradient descent, which aim to find the parameters that result in the smallest prediction error.

In supervised learning, the model learns from labeled examples. In contrast, unsupervised learning involves training models to find patterns in unlabeled data, such as grouping similar examples together. Semi-supervised learning is a hybrid approach, using a mix of both labeled and unlabeled data to train models. This is particularly useful when labels are costly or difficult to obtain.

Model training is a crucial step in machine learning, but it's also a complex one. We need to choose the right architecture, understand the role of model parameters, and apply the appropriate training techniques. But when we've matched the right model with the right problem, and trained it effectively, we can build machine learning systems that deliver impressive results.

2.1.2 Model Tuning: Fine-tuning Parameters and Hyperparameter Tuning

The process of fine-tuning model parameters

Baking & machine learning are more similar than you think - Midjourney

Baking & machine learning are more similar than you think - Midjourney

Machine learning model tuning is akin to the careful adjustments made while baking. It involves two key areas: fine-tuning model parameters and hyperparameter tuning.

Model parameters are intrinsic properties of the model, comparable to the texture and moisture level of a cake that are developed during the baking process. These parameters are learned from the training data. The "fine-tuning" of these parameters is an automatic process conducted by the learning algorithm as it learns from the data, minimizing the model's prediction error—like getting the perfect cake texture by letting the cake bake and checking periodically.

Differentiating between model parameters and hyperparameters

Hyperparameters, on the other hand, are preset conditions that are decided before the baking (or training) begins. They are like the oven temperature or baking time that the baker sets based on prior knowledge. Hyperparameter tuning involves finding the best combination of these preset conditions to produce the most delicious cake or, in our case, improve the model's performance.

This tuning process is akin to a search problem. We have a set of oven temperatures and baking times, and we need to find the combination that results in the best cake. Similarly, with a set of hyperparameters, we seek the combination that results in the best model performance. Techniques for hyperparameter tuning include grid search, random search, and Bayesian optimization, each with their pros and cons, and the choice among them depends on factors like the complexity of the cake (model), available baking time (computational resources), and specific requirements of the cake recipe (problem at hand).

2.1.3 Model Validation: Techniques for Ensuring Generalization

Importance of model validation

Validation in machine learning is like the final quality check for a dish—it ensures that the model, like the dish, is ready to serve. Just as a perfect recipe doesn't cause the cake to be too dry (underfitting) or too moist (overfitting), effective model validation ensures a balance between underfitting and overfitting. Overfitting refers to a model that has learned the training data too well, including its noise and outliers, making it perform poorly on new data. Underfitting is when the model is too simple to capture the underlying patterns in the data, leading to poor performance on both the training and validation data.

Introduction of validation techniques

Techniques like hold-out validation and cross-validation are used to ensure this balance. Hold-out validation is similar to setting aside a portion of a dish to taste and evaluate. However, the effectiveness of this technique can vary depending on how the data is divided.

On the other hand, cross-validation, especially k-fold cross-validation, is more like tasting a dish at various stages of cooking. The model is trained and tested several times, each time on a different subset of the data. Though more reliable than hold-out validation, this method is also more computationally intensive, much like the time and attention required to taste and adjust a dish at different stages.

Different metrics for evaluating model performance

Lastly, to measure the model's performance, various metrics are used, like a chef uses different criteria to judge a dish. The chosen metrics depend on the problem type, such as accuracy, precision, recall, and F1 score for classification problems, or Mean Squared Error (MSE), Root Mean Squared Error (RMSE), or Mean Absolute Error (MAE) for regression problems. Ultimately, model validation aims to ensure our model can effectively solve the problem it was designed for—like ensuring a dish pleases the taste buds of those it is served to.

2.1.4 Model Reuse and Using Pre-trained Models

Concept of model reuse

The process of creating a machine learning model from scratch, much like baking a cake from base ingredients, is an involved, often time-consuming endeavor. Fortunately, much like we can save time by using a pre-made cake mix, we can expedite the development process in machine learning by using pre-trained models or applying model reuse. This strategy is about leveraging the effort already expended in training models on large datasets to fast-track our development process and potentially enhance model performance.

Model reuse involves leveraging previously developed models for new tasks. This can take the form of using pre-trained models or applying a method known as transfer learning. Pre-trained models are machine learning models that have been trained on extensive datasets and are often made available by large tech companies or research institutions. They can be very useful in speeding up development, as they save us from starting the model building process from scratch.

Trade-offs between building models from scratch and using pre-built models

Just like using a pre-made cake mix for baking, there are several considerations when using pre-trained models. These include ensuring compatibility with the task at hand, assessing the complexity of the pre-built model, and evaluating its performance on similar tasks. While reusing models can speed up development, it doesn't afford the same level of customization as building models from scratch.

Use of pre-trained models in transfer learning

Transfer learning, an advanced form of model reuse, uses a pre-trained model on a new problem. It's particularly effective when the data for your problem is similar to the data the model was initially trained on. Using pre-trained models through transfer learning provides several advantages: faster development, improved performance, and lesser computational resources. However, it also has limitations, such as the requirement of similar data distribution and the risk of negative transfer where the pre-trained model could potentially worsen performance if the tasks are too dissimilar.

Techniques for adapting and fine-tuning pre-trained models

In the world of machine learning, several popular pre-trained models are widely used in different domains. In the field of computer vision, models like VGG and ResNet have revolutionized image classification and object detection. These models were trained on massive image datasets like ImageNet and have shown exceptional performance in their tasks.

In the domain of natural language processing, the advent of models like BERT and GPT has brought significant advancements. BERT, introduced by Google, was pre-trained on a large corpus of text and has demonstrated remarkable effectiveness in understanding the context of language. Following BERT, OpenAI introduced the GPT series. These models, with their exponentially increasing scale, made headlines for their capabilities in language generation tasks.

However, it's important to keep in mind that the landscape of pre-trained models is continually evolving, and newer models keep emerging with enhanced capabilities. Remember, the key is to choose the pre-trained model that best suits the task at hand and aligns with your data distribution.

To wrap up, model reuse and using pre-trained models is akin to using a pre-made cake mix. It's a strategy that can significantly speed up the development process and improve model performance. But just as you need to pick the right cake mix for your baking endeavor, you also need to carefully select the right pre-trained model for your machine learning task.

2.2 Model Evaluation

2.2.1 Model Evaluation: Metrics for Assessing Performance

Importance of assessing machine learning models' performance

When creating machine learning models, we're essentially setting out to solve a problem. But creating the model is just the beginning. After that, we need to figure out how well the model is doing its job. Much like tasting a dish while cooking, model evaluation allows us to check on the progress of our model, making sure it's on track to becoming something useful and effective.

In the machine learning world, we use evaluation metrics to measure our models' performance. There's a wealth of different metrics out there, each with their specific purposes and interpretations. The right one to use can heavily depend on your specific task and the real-world context. Let's look at some commonly used ones.

Commonly used evaluation metrics

Sweet watermelon competition - Midjourney

Sweet watermelon competition - Midjourney

Imagine you've just built a machine learning model to predict whether a watermelon will be sweet before you cut it open. In this case, accuracy, which measures the proportion of total predictions that were correct, could be a helpful metric. But what happens if you have a dataset where 90% of the watermelons are sweet? A model that blindly labels every watermelon as sweet will have a high accuracy of 90%, but it won't be useful in picking out those few non-sweet watermelons.

That's where precision and recall come in. Precision and recall are particularly useful when one class is much more prevalent, or when we care more about one class over the other. In the context of our watermelon prediction task, precision would be the proportion of watermelons that our model correctly identified as sweet out of all the watermelons it predicted as sweet. It's answering the question: "When the model said the watermelon was sweet, how often was it correct?"

On the other hand, recall in our watermelon scenario would be the proportion of watermelons that the model correctly identified as sweet out of all the actual sweet watermelons. It answers: "How many of the actual sweet watermelons did the model manage to catch?"

The F1 score comes in handy when you want a balance between precision and recall. The F1 score is essentially a weighted average of precision and recall. Think of it as hosting a big watermelon tasting event where both serving a bitter watermelon and wrongly labeling a sweet one could harm your reputation. You'd aim for a model with a high F1 score.

In binary classification tasks, we often have to decide on a threshold for classifying observations based on the probabilities predicted by the model. The Area Under the Receiver Operating Characteristic Curve, or AUC-ROC, measures the model's ability to correctly classify observations as positive or negative across all possible thresholds, providing a comprehensive evaluation of the model's performance. Thus, the AUC gives us a single measure of how our model performs across all possible classification thresholds, ranking its ability to distinguish between sweet and non-sweet watermelons.

Significance of the choice of metric

But it's not just about understanding these metrics; it's about aligning them with the real-world context of the problem you're trying to solve. If you're only interested in accuracy and use that to build a model that predicts all watermelons as sweet, you're going to be in for a lot of bitter surprises. On the other hand, a model with a high recall might ensure that you catch all the sweet watermelons, but at the cost of potentially mislabeling and serving a lot of non-sweet ones.

Choosing the right metric, or set of metrics, for your problem ensures that the model’s performance aligns with the real-world impact you want it to have. They help guide you as you steer your models towards producing meaningful outcomes in the real world. And they serve as a reminder that what lies at the heart of machine learning isn't just algorithms and data, but the real-life effects these models have on our decisions and day-to-day lives.

2.2.2 Model Comparison and Selection

Importance of comparing different models' performance

Training a machine learning model is a crucial phase in the process, but it's not the final destination. An equally critical step is model comparison and selection, where different models are evaluated to determine which one best suits the task at hand.

This model selection process is akin to choosing the right tool for a particular job. After training various models, each might exhibit unique strengths and weaknesses. These can be evaluated using metrics such as accuracy, precision, recall, F1 score, or AUC. However, relying solely on these metrics could result in a choice that is more luck than judgment. Statistical techniques like the paired t-test or ANOVA can be employed to determine whether performance differences between models, such as a decision tree and a neural network, are genuinely significant or just a result of random variation in the test data.

Trade-offs between model complexity and performance

Cross-validation emerges as a powerful tool in both hyperparameter tuning and model selection. This technique aids in ensuring that our models do not merely fit the training data well, but can also generalize effectively to new, unseen data. Crucially, when different models are trained, each with its own strengths and weaknesses, cross-validation helps provide a more reliable estimate of how each model might perform on unseen data. This estimation is more reliable than a single training/test split, reducing the risk of the estimate being influenced by a specific partitioning of the data. Consequently, cross-validation is especially valuable when deliberating the trade-offs between model complexity and performance.

Simple models may be quick and easy to interpret but risk oversimplifying the problem and missing significant patterns. On the flip side, complex models, like deep neural networks, might fit the training data exceedingly well but potentially overgeneralize, fitting the noise instead of the underlying patterns and leading to overfitting. Balancing this bias-variance trade-off is a crucial consideration during model comparison.

Techniques for model selection

Efficient model selection techniques can help strike a balance between performance and complexity. Grid search, an exhaustive approach to exploring a manually specified subset of the hyperparameter space, is a traditional method but can be computationally expensive with larger datasets or complex models. An alternative is random search, which samples hyperparameters at random for a fixed number of iterations, providing a more efficient approach for high-dimensional hyperparameter spaces. Bayesian optimization, on the other hand, uses the objective function to select the most promising hyperparameters to evaluate, ensuring a thoughtful balance in the search space.

Evaluating model performance across different evaluation metrics

Model comparison and selection are not just about picking the model with the highest accuracy or the lowest error. The process requires careful consideration of the problem's nature, the relevance of different metrics, available computational resources, and the fine balance between performance and model complexity. The selected model should align well with the problem's nature, objectives, and constraints, and it should perform well not just on the training data but on unseen data as well. Much like a mechanic wouldn't choose a tool simply because it's the newest or most expensive, the best model isn't always the most complex or the one that performs best on the training set.

2.2.3 Transfer Learning: Leveraging Existing Models

Models can transfer their learning - Midjourney

Models can transfer their learning - Midjourney

Overview of transfer learning

Choosing the best model for a task isn't always about selecting between freshly trained algorithms. Sometimes, the key to optimal performance is to use, or "transfer," the knowledge already acquired by an existing model to a new related task. This method, known as transfer learning, can significantly boost a model's performance and provide substantial efficiency gains, especially when dealing with high-dimensional data like images or text.

So, how does transfer learning work? In essence, it capitalizes on the idea that general features learned for one task could be useful for another. To draw a parallel, if you are an expert in French, you could leverage that knowledge to learn Spanish more quickly. Similar principles apply in machine learning. For example, a model trained to identify dogs in images may already know a lot about recognizing general features such as edges, shapes, or textures. When given a new but related task, like identifying cats, the model doesn't need to learn these features from scratch. It can "transfer" this knowledge, thus reducing the training time and potentially improving performance.

Use of pre-trained models

Transfer learning is particularly beneficial when the new task has limited training data. Training a complex model like a deep neural network from scratch requires a lot of data. Without sufficient data, the model may overfit, learning the training data too well and failing to generalize to new examples. By using a pre-trained model as a starting point, transfer learning can mitigate this risk.

The benefits of transfer learning come into full bloom when the new task is similar to the task the original model was trained on. For instance, if the pre-trained model was trained on a large dataset of general images (like ImageNet), it may perform well on a variety of image recognition tasks. Likewise, a model trained on a broad text corpus might excel in various natural language processing tasks.

Successful applications of transfer learning

In practice, there are several ways to apply transfer learning. One common approach is to use a pre-trained model as a feature extractor. Here, the initial layers of the model, which have learned general features, are kept fixed while the final layers are retrained on the new task. Another approach is to fine-tune the entire model on the new task, adjusting the pre-trained weights slightly to optimize performance.

Both computer vision and natural language processing have seen successful applications of transfer learning. For instance, pre-trained models like VGG16, Inception, or ResNet, trained on millions of images, have been used effectively as base models for various image recognition tasks, from diagnosing diseases in medical imaging to identifying objects in autonomous vehicles. Similarly, in natural language processing, models like BERT, GPT, or ELMo, pre-trained on large text corpora, have shown substantial performance improvements in tasks like sentiment analysis, text classification, and named entity recognition.

However, transfer learning is not a silver bullet. The success of this method largely depends on the similarity between the original task and the new task. If the tasks are too dissimilar, transfer learning may not bring much benefit, and sometimes it may even impair performance. Therefore, it's important to evaluate the potential for transfer learning on a case-by-case basis, considering factors such as the similarity of the tasks, the amount of available data for the new task, and the complexity of the models involved.